NIS Sorter/Scanner


Description

I have been working on this for some time and it’s finally up! Vision sorters for nuts have been available for many years, but they are mostly fairly big and targeted at high-volume, moderate-accuracy applications. This sorter is compact and designed for low-volume, high-accuracy work. To get to commercial volumes it is necessary to link several together and/or arrange the installation so they can run 24/7.

In addition to acting as a sorter it can be used as a lab scanner to assess the quality of samples, and results from multiple machines can be compared directly. This might be used in research situations. Commercially, it could be used to characterize volume sorters; then, given a sample of a new batch, the optimum settings for the volume sorter could be determined before the actual processing of the batch began.

Availability

The sorter can probably be made available at any time provided there is enough interest. It would probably need four or five Australian advance orders to make producing them worthwhile. Email me if you are interested.

Method of analysis and decision making

In the early days, vision sorters worked by simply inspecting each pixel in the data stream. If a pixel was determined to be outside the user-defined ‘accept’ colour-space, this would queue a delayed ‘reject’ signal that would fire ejectors to take the nut out as it passed by them (typically with a compressed air jet in high volume machines). This principle of operation continues today, though the algorithms have become more sophisticated and can now also consider the size & shape of the object as well as the size of defects detected on it.
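As a rough illustration of that early per-pixel approach, here is a minimal Python sketch. It is not code from any real machine: the RGB 'accept' box, the `EJECT_DELAY_LINES` value and the function names are all assumptions made for the example, and a real sorter would also have to ignore background pixels.

```python
from collections import deque

# Hypothetical 'accept' colour space: a simple RGB box (assumed values).
ACCEPT_MIN = (90, 50, 20)
ACCEPT_MAX = (220, 170, 120)
# Assumed number of scan lines between the camera and the ejector.
EJECT_DELAY_LINES = 3


def pixel_ok(pixel):
    """True if every channel of the pixel lies inside the accept box."""
    return all(lo <= c <= hi for c, lo, hi in zip(pixel, ACCEPT_MIN, ACCEPT_MAX))


def run_sorter(scan_lines):
    """Return the ejector state (True = fire) for each scan-line time step."""
    # Delay line: a reject seen now reaches the ejector EJECT_DELAY_LINES later.
    pending = deque([False] * EJECT_DELAY_LINES)
    fired = []
    for line in scan_lines:
        # A single out-of-range pixel is enough to queue a reject.
        reject = any(not pixel_ok(p) for p in line)
        pending.append(reject)
        fired.append(pending.popleft())
    return fired
```

The deque acts as the delay between detection and ejection, which is why one bad pixel is enough to remove a whole nut in this style of machine.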

This machine goes a step further. Starting with one or more high resolution images, it classifies each object & defect by type, measures its level of severity and from there makes an accept/reject decision. Perhaps the easiest way to explain how the system works is to go through the process steps using a reject nut as an example.

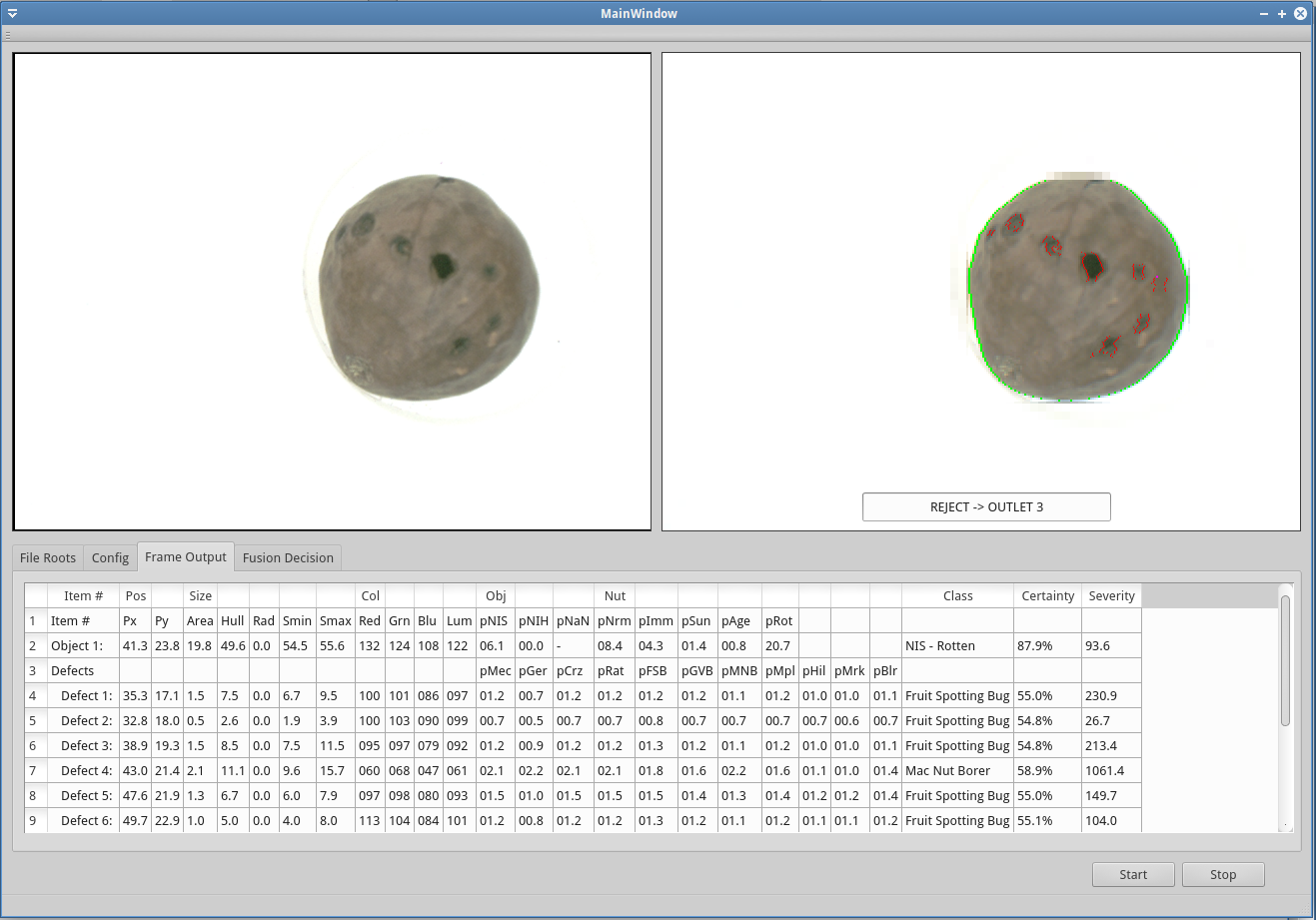

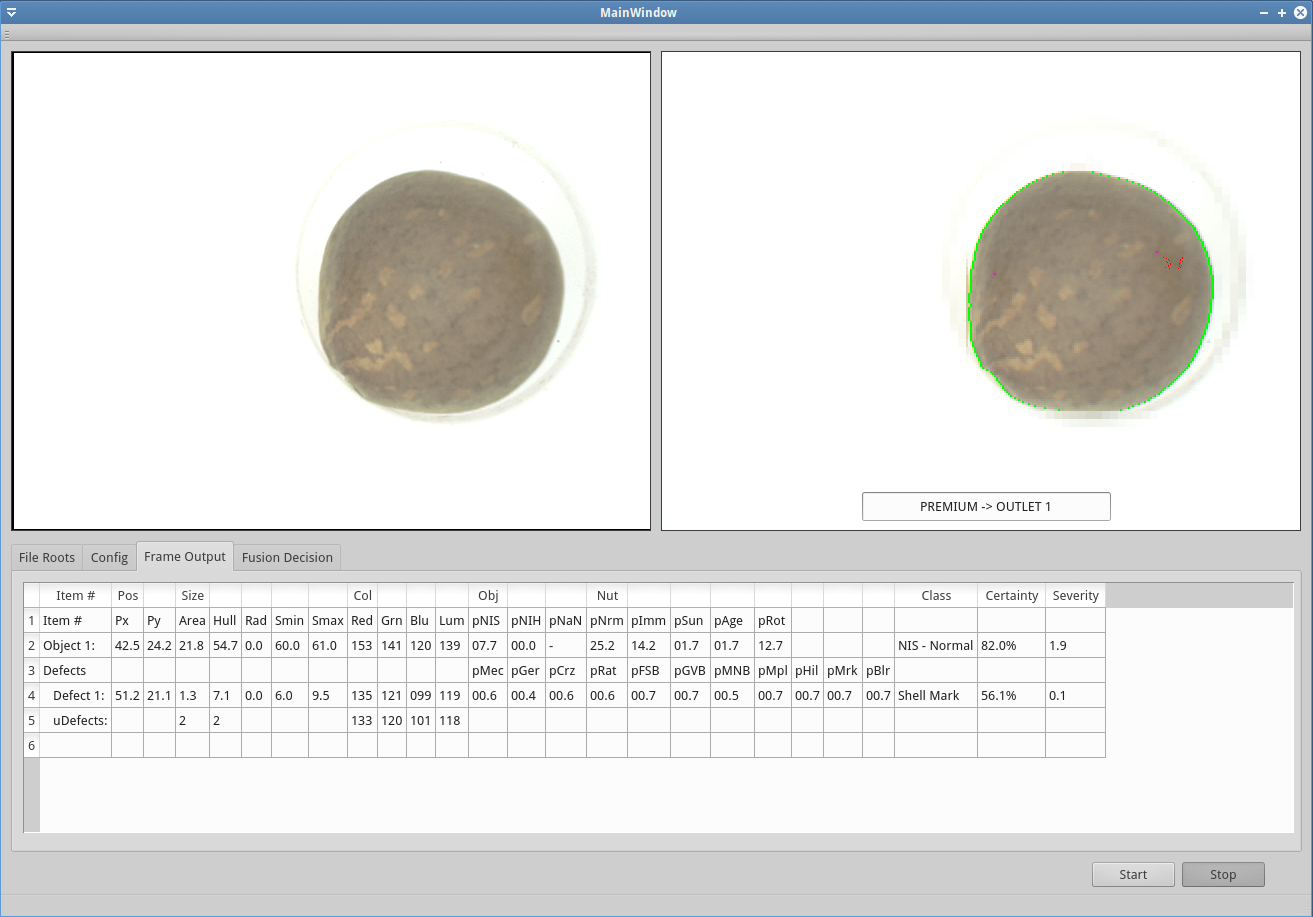

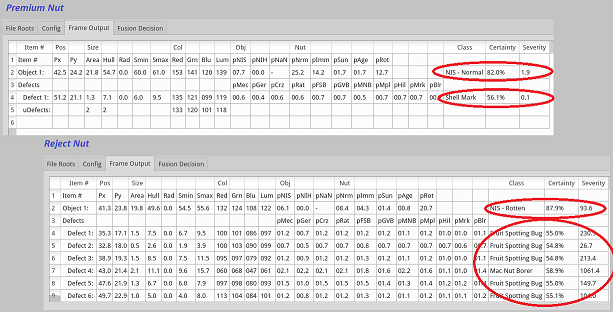

The following two screenshots show the processing of a single frame of image data for reject & accept nuts.


The system uses five such frames per nut in different orientations in order to cover the whole surface. Looking at the reject nut picture, the left half shows the unprocessed image and the result of the analysis is shown in the right half. The boundary of the nut is shown in green and defects are highlighted in red.

Frame Analysis

The bottom half of each image contains a table of the resulting analysis.

- Line 2 of the tables is concerned with the overall nut. It takes a number of measurements of size, shape & colour and then, in the final three columns, determines that the reject nut is likely ‘Rotten’ with fairly high certainty and moderate severity.

- Lines 4 thru 9 do a similar analysis for each of the identified defects. In this case they are mostly determined to be ‘Fruit Spotting Bug’ with varying levels of severity (one is misidentified as ‘Mac Nut Borer’, and indeed these can have a similar appearance in some cases).

It is important to note that at this stage the user cannot adjust the settings for the analysis algorithms. Also note that at this stage there has been no decision about what to do with the nut; the output is simply a set of measurements of the severity of various faults. This is by design, so that the machines can be calibrated such that all machines will output exactly the same results at this point in the analysis. This allows data from different machines (and different years etc) to be directly compared.

Decision Making

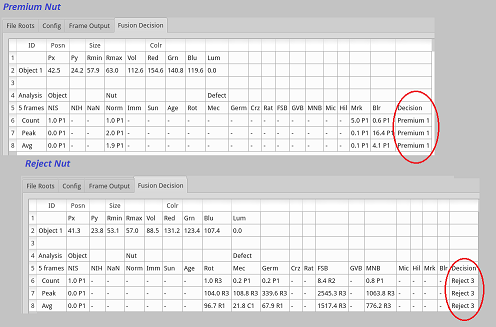

The next table shows the fusing of data from five image frames along with other sensors such as load cells as required. This is the basis for the final decision making process.

Lines 6 thru 8 present the data from the analysis of all possible defect types from the five image frames. For each defect type it is presented as

- Count - the estimated total number of occurrences on the whole surface of the nut. For example in the FSB (Fruit Spotting Bug) column it estimates there are 8.4 lesions, which is probably quite accurate given that you can see approximately seven on one side of the nut. The Rot (rotten) count of 1.0 means that it was classified as such in every frame.

- Peak - this is the severity value of the worst occurrence - for FSB it is saying the worst lesion scored 2545.

- Avg - is the average severity of all observed occurrences - for FSB it gives a value of 776.

In this case the principal defect on this nut is FSB but the table shows that the system has misidentified a few cases as MNB (Mac Nut Borer). There are also a few marks classified as Mechanical Damage and Germination - the latter may be valid as a split in the suture can be observed at the top of the nut image.

Values at this point still cannot be adjusted with user settings. User control occurs at the next step.
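To make the Count / Peak / Avg idea concrete, here is a small Python sketch of fusing per-frame detections into one summary per defect type. The post does not say how the machine estimates Count, so the `overlap_factor` correction below is purely an assumption for illustration, as are the function name and the data layout. Whole-nut classes such as Rot, where the count appears to be the fraction of frames, are not covered.

```python
from collections import defaultdict
from statistics import mean


def fuse_frames(frames, overlap_factor=1.0):
    """Fuse detections from several frames into per-defect summary values.

    frames: one list per image frame, each holding (defect_type, severity) tuples.
    overlap_factor: hypothetical correction for lesions seen in more than one
    frame (an assumption, not the machine's actual method).
    """
    severities = defaultdict(list)
    for detections in frames:
        for defect_type, severity in detections:
            severities[defect_type].append(severity)
    return {
        defect_type: {
            "count": len(values) / overlap_factor,  # estimated occurrences
            "peak": max(values),                    # worst single occurrence
            "avg": mean(values),                    # mean over all observations
        }
        for defect_type, values in severities.items()
    }


# Made-up detections from five frames, for illustration only.
frames = [[("FSB", 1000), ("FSB", 500)], [("FSB", 3000)], [("MNB", 200)], [], []]
summary = fuse_frames(frames, overlap_factor=1.5)
```

Defect types that are never observed simply do not appear in the result, whereas the real table lists every possible defect type.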

Beside each of the calculated values is a code indicating quality. These codes are completely user definable. In this case it uses seven codes - P1, P2 are graded Premium; C1, C2 are graded Commercial; and R1, R2, R3 are graded Reject. How each calculated severity value in the table is converted to a code is also defined by the user and can be set up for each individual defect type.

Once all of the values have been coded the system assigns the nut the worst code observed - in this case the nut is marked Reject 3. Then there is a second user defined process that determines which of the five outlets nuts of that classification are sent to - in this case Outlet 3 (of five).
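The two user-defined steps (severity to code, then code to outlet) could be sketched in Python as below. The threshold numbers and the outlet mapping are invented for the example and are not the machine's real settings. For brevity the sketch codes only one severity value per defect type, whereas the table codes every calculated value.

```python
from bisect import bisect_right

# Quality codes ordered from best to worst, as in the example above.
CODES = ["P1", "P2", "C1", "C2", "R1", "R2", "R3"]

# Hypothetical per-defect severity limits: six limits split the range into
# seven bands, one per code. A severity equal to a limit gets the worse code.
THRESHOLDS = {
    "FSB": [100, 200, 400, 800, 1200, 2000],
    "MNB": [150, 300, 600, 900, 1500, 2500],
}

# Hypothetical code-to-outlet mapping (only three of the five outlets used).
OUTLETS = {"P1": 1, "P2": 1, "C1": 2, "C2": 2, "R1": 3, "R2": 3, "R3": 3}


def code_for(defect_type, severity):
    """Convert one severity value to a quality code for that defect type."""
    return CODES[bisect_right(THRESHOLDS[defect_type], severity)]


def grade_nut(peaks):
    """Return (worst code, outlet) for a dict of defect_type -> severity."""
    codes = [code_for(d, s) for d, s in peaks.items()]
    # The nut takes the worst code observed; a nut with no defects gets the best.
    worst = max(codes, key=CODES.index, default=CODES[0])
    return worst, OUTLETS[worst]


result = grade_nut({"FSB": 2545, "MNB": 400})
```

Because the thresholds and outlet map are plain data, a complete separation configuration could live in a file and be swapped without touching the defect assessment.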

Batch Summary

(Screenshot incoming)

All of the data - images, sensors, analysis, decisions - can be dumped into data files for further statistical analysis. While this isn’t really important for high volume sorting applications, it is useful in scanning situations to be able to log all data. For example, in a multi-year trial it would allow reprocessing of older raw data as improved algorithms and settings became available.
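One simple way to log per-nut data so it can be reprocessed later is one JSON record per line. The Python sketch below assumes that layout; the post does not describe the actual file format, and the field names `nut_id` and `fused` are hypothetical.

```python
import io
import json


def write_record(fh, record):
    """Append one nut's data to an open text file as a single JSON line."""
    fh.write(json.dumps(record) + "\n")


def read_records(fh):
    """Load all logged nuts from an open text file, skipping blank lines."""
    return [json.loads(line) for line in fh if line.strip()]


def regrade(records, grader):
    """Re-run a (possibly newer) grading function over stored measurements."""
    return [(r["nut_id"], grader(r["fused"])) for r in records]


# In-memory stand-in for a log file; a real log would use open(path, "a").
log = io.StringIO()
write_record(log, {"nut_id": 1, "fused": {"FSB": {"peak": 2545}}})
log.seek(0)
stored = read_records(log)
```

Keeping the stored measurements separate from the grading function is what makes it possible to apply new settings to old data.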

Conclusion

The system isolates the process of defect assessment from separation decisions by design. The defect assessment uses built in settings that cannot be adjusted by the user, whereas the separation decisions are controlled by the user. It is not as complicated as it sounds, and it has the following advantages

- Summary data from calibrated and invariable defect assessments can be compared across machines and across years etc.

- This calibrated output is useful for lab/research applications and in commercial sorting.

- Allows separation decisions to be based on levels of defect ‘severity’ rather than abstract concepts such as colour spaces etc.

- Highly flexible separation configuration.

- Buyers of NIS can release their preferred separation configuration files that can be used with any machine thereby ensuring the output product conforms with their purchase specifications. A buyer might have several configurations tailored for different customers.

Further Work

It is good to have the machine actually sorting nuts and doing a good enough job to be useful but there is still a bit more to do. Finalizing the calibration and settings for the classification & severity assessment algorithms will take a couple of months. I will also be installing it into our process line in the next couple of weeks and putting it to work in real world conditions.

I am also planning to make some changes that will cater for multi-lane versions being run from a single control system in order to get throughput volumes up to commercial levels.

Robotic Framework

This is the first machine using a robotic framework that I have been developing for some time. The framework consists of three parts -

- class libraries for multi-threaded PC based high level processing including vision analysis

- standardized embedded classes running on (custom) general purpose PCBs that do machine control (you can see a stack of three of these prototype boards on top of the machine)

- middleware classes on the PC that manage the link between non-deterministic classes on the PC vs the real-time classes running on the PCBs.

At this stage the framework seems to be doing as it’s told with good efficiency, though it’s still under active development. Applying it to this sorter has highlighted a few areas that can be improved. In particular I will be making some modifications that would simplify using it with multi-lane machines.

The next application for the framework will be adding autonomy to the drone harvester, hopefully later this year.